AI Should Support Learning, Not Undermine It

AI is here to stay, but that does not mean every use of it is good for students. Why Vector is taking a more deliberate approach to educational technology.

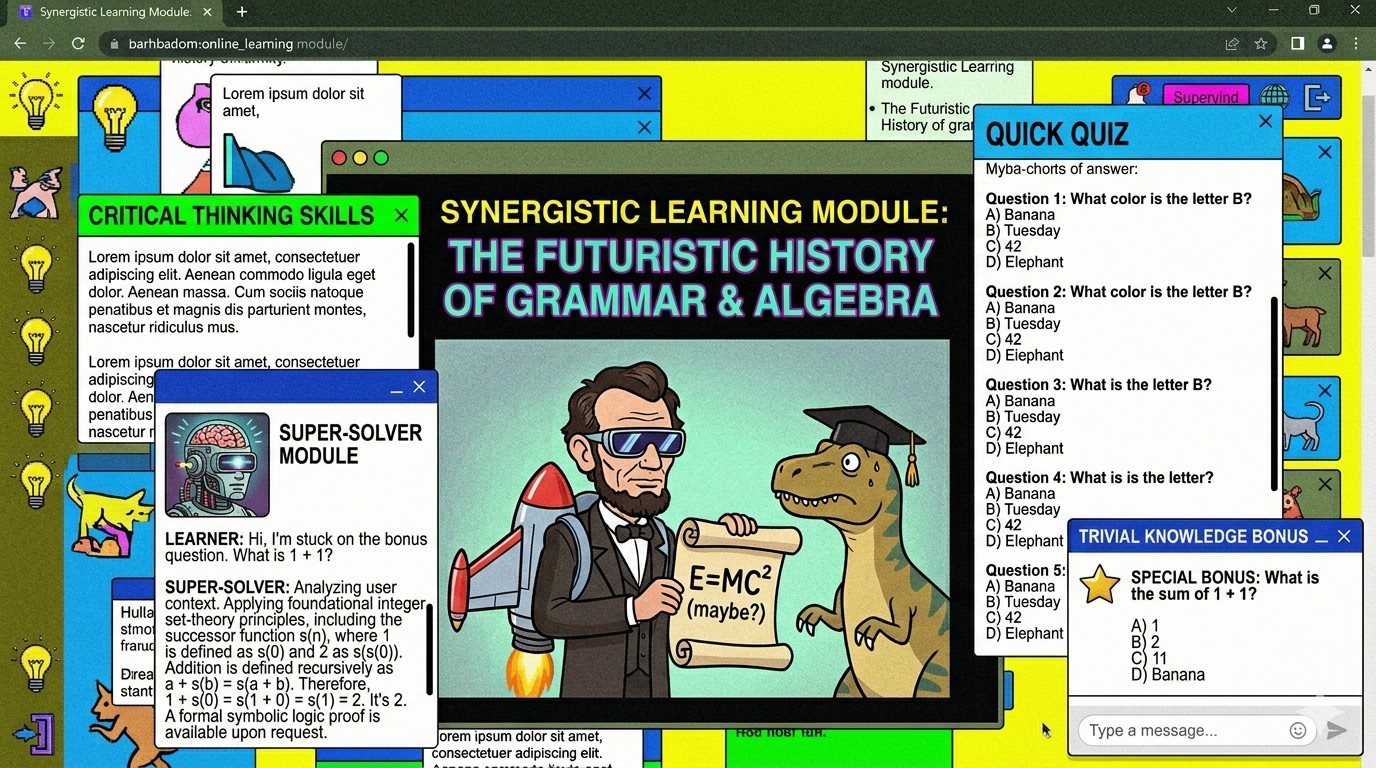

^ The cover image above is AI slop

AI is here to stay, and we are not against it. We think students should learn how to use it well, because it is going to shape how people work, study, and solve problems. But there is a difference between using AI well and relying on it. What worries us is not the tool itself. It is how easily learning can shift from understanding things properly to simply getting answers faster, and how that shift can be mistaken for progress.

That's why we feel that the conversation around AI in education is still a bit incomplete. We tend to place the focus on what AI can do, not on what repeated reliance on it may slowly undo. Finishing work faster can look like progress. However, learning has never been just about speed. It also takes effort, reflection, memory, frustration, and the repeated process of trying to make sense of something all by yourself. We fear that when too much of that gets removed, the student may still produce answers, but the learning underneath becomes much thinner than it appears.

This is part of why a recent paper, "Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task" (Kosmyna et al., 2025), stood out to us. The paper found signs that people using LLM help were less mentally engaged and felt less ownership over their work than those relying on their own thinking or regular search tools. While it looks at essay writing rather than every kind of schoolwork, the broader idea is still difficult to ignore. When a tool reduces the effort needed to do something, it may also make it easier to skip the thinking that learning depends on.

Beyond Syllabus Alignment

Many education platforms like to present MOE syllabus alignment alongside the most advanced technology, as though that alone proves educational value. We think alignment is important, and we use syllabus-aligned materials too. But to us, that is only the starting point. A product can be aligned to the syllabus yet still build shallow habits. It can look polished, be marketed well, and have a whole plethora of cutting-edge features, while still training students to reach for quick help before they have really tried to think through a problem themselves. To us, what makes a product impactful is not simply whether it aligns to the syllabus, but whether it helps students learn in a way that is healthy and sustainable.

That is why we have tried to be selective with how we build Vector. Features like snapping a photo of a question and getting instant help are obviously convenient. But convenience alone is not a good enough reason to justify a design choice. In education, design choices shape habits. The easier it becomes to get help immediately, the harder it becomes to keep thinking for just a bit longer on your own. A feature can feel helpful in the moment while still building dependence over time. For us, that trade-off is concerning.

What We Are Building Toward

Some AI companies may look at Vector and say we are not technical enough. Some tuition centres may say we cannot replace them. They are both right. That's because we are not trying to be either, and we are not trying to be everything. We are not trying to build the most impressive AI product or turn education into a showcase of automation. We are also not trying to replace good teachers. Good tutors who know how to guide, challenge, and encourage students well will always be valuable. At the same time, we also know that many students are paying high prices for support that is inconsistent, over-marketed, and built from recycled materials dressed up as something new.

We want to build support that students find useful without making them overly reliant on it. We hope to make studying more manageable and accessible, without taking away the effort that gives learning its value in the first place. Features that look impressive in a demo are not always good for students. We constantly remind ourselves how important it is to build with restraint, and to recognise that not every shortcut is helpful in the long run.

In the end, we do not think educational technology should be judged only by how advanced it looks or how fast it produces results. It should also be judged by what kind of learner it is shaping. If something makes schoolwork easier but leaves students less able to think for themselves, then it does not address the problem we are trying to solve.

That is the direction we want Vector to take. Less hype, less automation for its own sake, and fewer features that create dependence while disguising themselves as progress and innovation. We want to make something reliable, accessible, thoughtful, and genuinely useful. Something we ourselves would have used as students and would give to our children. Something students return to not because it does the thinking for them, but because it helps them keep going without feeling lost or overwhelmed.

References

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025). Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing task (arXiv:2506.08872). arXiv. https://doi.org/10.48550/arXiv.2506.08872